Recently, we need to perform a HotFix promotion on our Production environment. In HotFix promotion, the vendor who has developed the application has done some major enhancements to fix the bugs on the Application as well as the database. As a pre-requisite, before the HotFix promotion starts, we need to break the LogShipping as well as Transactional Replication. The database on which the HotFix was going to be applied was around 90 GB in size. Setting up the LogShipping again was not a difficult ask but SQL Server setting up the Transactional Replication again did put us in a deep trouble. In this article, I am going to discuss in detail the method which I adopted to set up the Transactional Replication.

SQL Server Versions:

Publisher: OLTP DB Server, SQL Server 2005, Service Pack 3,32 GB of RAM,8 CPU’s

Subscriber: Reporting DB Server, SQL Server 2005, Service Pack3, 16 GB of RAM, 12 CPU’s

Both the above mentioned servers were located in the same DataCenter.

One important point to mention here is that on the Suscriber i.e. the Reporting Server we have created a lot of indexes for better performance of the Select statements. Before I started with the HotFix promotion, I ensured that I have the backup of all the indexes. In order to take the backup of the indexes, I executed the below query against the database named ABC (Database which was involved in Replication) on the Reporting DB Server,

SELECT

ixz.object_id,

tablename = QUOTENAME(scmz.name) + '.' + QUOTENAME((OBJECT_NAME(ixz.object_id))),

tableid = ixz.object_id,

indexid = ixz.index_id,

indexname = ixz.name,

isunique = INDEXPROPERTY (ixz.object_id,ixz.name,'isunique'),

isclustered = INDEXPROPERTY (ixz.object_id,ixz.name,'isclustered'),

indexfillfactor = INDEXPROPERTY (ixz.object_id,ixz.name,'indexfillfactor')

INTO #tmp_indexes

FROM sys.indexes ixz

INNER JOIN sys.objects obz

ON ixz.object_id = obz.object_id

INNER JOIN sys.schemas scmz

ON obz.schema_id = scmz.schema_id

WHERE ixz.index_id > 0

AND ixz.index_id < 255 ---- 0 = HEAP index, 255 = TEXT columns index

--AND INDEXPROPERTY (ixz.object_id,ixz.name,'ISUNIQUE') = 0

AND INDEXPROPERTY (ixz.object_id,ixz.name,'ISCLUSTERED') = 0

ALTER TABLE #tmp_indexes ADD keycolumns VARCHAR(4000), includes VARCHAR(4000)

GO

DECLARE @isql_key VARCHAR(4000),

@isql_incl VARCHAR(4000),

@tableid INT,

@indexid INT

DECLARE index_cursor CURSOR

FOR

SELECT

tableid,

indexid

FROM #tmp_indexes

OPEN index_cursor

FETCH NEXT FROM index_cursor INTO @tableid, @indexid

WHILE @@FETCH_STATUS <> -1

BEGIN

SELECT @isql_key = '', @isql_incl = ''

SELECT --ixz.name, colz.colid, colz.name, ixcolz.index_id, ixcolz.object_id, *

--key column

@isql_key = CASE ixcolz.is_included_column

WHEN 0

THEN CASE ixcolz.is_descending_key

WHEN 1

THEN @isql_key + COALESCE(colz.name,'') + ' DESC, '

ELSE @isql_key + COALESCE(colz.name,'') + ' ASC, '

END

ELSE @isql_key

END,

@isql_incl = CASE ixcolz.is_included_column

WHEN 1

THEN CASE ixcolz.is_descending_key

WHEN 1

THEN @isql_incl + COALESCE(colz.name,'') + ', '

ELSE @isql_incl + COALESCE(colz.name,'') + ', '

END

ELSE @isql_incl

END

FROM sysindexes ixz

INNER JOIN sys.index_columns AS ixcolz

ON (ixcolz.column_id > 0

AND ( ixcolz.key_ordinal > 0

OR ixcolz.partition_ordinal = 0

OR ixcolz.is_included_column != 0)

)

AND ( ixcolz.index_id=CAST(ixz.indid AS INT)

AND ixcolz.object_id=ixz.id

)

INNER JOIN sys.columns AS colz

ON colz.object_id = ixcolz.object_id

AND colz.column_id = ixcolz.column_id

WHERE ixz.indid > 0 AND ixz.indid < 255

AND (ixz.status & 64) = 0

AND ixz.id = @tableid

AND ixz.indid = @indexid

ORDER BY

ixz.name,

CASE ixcolz.is_included_column

WHEN 1

THEN ixcolz.index_column_id

ELSE ixcolz.key_ordinal

END

--remove any trailing commas from the cursor results

IF LEN(@isql_key) > 1 SET @isql_key = LEFT(@isql_key, LEN(@isql_key) -1)

IF LEN(@isql_incl) > 1 SET @isql_incl = LEFT(@isql_incl, LEN(@isql_incl) -1)

--put the columns collection into our temp table

UPDATE #tmp_indexes

SET keycolumns = @isql_key,

includes = @isql_incl

WHERE tableid = @tableid

AND indexid = @indexid

FETCH NEXT FROM index_cursor INTO @tableid,@indexid

END --WHILE

CLOSE index_cursor

DEALLOCATE index_cursor

DELETE FROM #tmp_indexes WHERE keycolumns = ''

SET NOCOUNT ON

SELECT

'IF NOT EXISTS(SELECT * FROM sys.indexes WHERE object_id = OBJECT_ID(N''' + ti.TABLENAME + '''' + ') AND name = N' + '''' + ti.INDEXNAME + '''' + ')' + ' ' +

'CREATE '

+ CASE WHEN ti.ISUNIQUE = 1 THEN 'UNIQUE ' ELSE '' END

+ CASE WHEN ti.ISCLUSTERED = 1 THEN 'CLUSTERED ' ELSE '' END

+ 'INDEX ' + QUOTENAME(ti.INDEXNAME)

+ ' ON ' + (ti.TABLENAME) + ' '

+ '(' + ti.keycolumns + ')'

+ CASE

WHEN ti.INDEXFILLFACTOR = 0 AND ti.ISCLUSTERED = 1 AND INCLUDES = ''

THEN /*ti.Filter_Definition +*/ ' WITH (SORT_IN_TEMPDB = OFF) ON [SECONDARY]'

WHEN INDEXFILLFACTOR = 0 AND ti.ISCLUSTERED = 0 AND ti.INCLUDES = ''

THEN /*ti.Filter_Definition +*/ ' WITH (ONLINE = OFF, SORT_IN_TEMPD

B = OFF) ON [SECONDARY]'

WHEN INDEXFILLFACTOR <> 0 AND ti.ISCLUSTERED = 0 AND ti.INCLUDES = ''

THEN /*ti.Filter_Definition +*/ ' WITH (ONLINE = OFF, SORT_IN_TEMPDB = OFF, FILLFACTOR = ' + CONVERT(VARCHAR(10),ti.INDEXFILLFACTOR) + ') ON [SECONDARY]'

WHEN INDEXFILLFACTOR = 0 AND ti.ISCLUSTERED = 0 AND ti.INCLUDES <> ''

THEN ' INCLUDE ('+ti.INCLUDES+') WITH (ONLINE = OFF, SORT_IN_TEMPDB = OFF) ON [SECONDARY]'

ELSE ' INCLUDE('+ti.INCLUDES+') WITH (FILLFACTOR = ' + CONVERT(VARCHAR(10),ti.INDEXFILLFACTOR) + ', ONLINE = OFF, SORT_IN_TEMPDB = OFF) ON [SECONDARY]'

END

FROM #tmp_indexes ti

JOIN sys.indexes i ON ti.Object_id = i.object_id and ti.indexname = i.name

JOIN sys.filegroups fg on i.data_space_id = fg.data_space_id

WHERE LEFT(ti.tablename,3) NOT IN ('sys', 'dt_') --exclude system tables

ORDER BY

ti.tablename,

ti.indexid,

ti.indexname

DROP TABLE #tmp_indexes

On the Reporting server, the indexes were kept on a separate dedicated SAN whose size was 110 GB and RAID 10. The indexes were allocated to the Secondary File Group just to ensure excellent performance.

The replication Snapshot Agent files was configured to reside under the following path on the OLTP DB server,

D:\Microsoft SQL Server\MSSQL.1\MSSQL\repldata\unc\ABC\20111113144542

The drive D was a SAN drive of RAID 10 which was holding the SQL Server Data Files as well. Before I started setting up the Replication process again, the free space available on the D drive of the server was 50 GB.

I created the Publisher as well as the Subscriber using the wizard. Once completed, I Started the Snapshot Agent. Once the Snapshot got created, I was surprised to see that the free space available on the D drive was just a mere 8 GB. This being a Production OLTP database server I was in some sort of worry at this stage.

Once the Snapshot got created, I started the Log Reader Agent so that the system starts generating the Replicated Commands which worked well. I then started the Synchronisation status so that the corresponding database on the subscriber starts receiving the Replicated Commands. 5-6 hours passed by but still the subscriber wasn’t able to complete the process of receiving the Replicated commands. When I viewed the synchronization status of the subscriber, I noticed the following error message:

The process is running and is waiting for a response from the server.

Since I have already lost a huge amount of time and with just 8-9 hours remaining for my users to come ONLINE, I decided that there is a need to change the strategy to set up the Replication process. I stopped the Log Reader Agent as well as the Synchronization Agent manually.I then manually deleted all the files which was present under the path,

D:\Microsoft SQL Server\MSSQL.1\MSSQL\repldata\unc\ABC\20111113144542

This was done to free up the space on the D drive of the OLTP DB Server.

I then took the Full Backup of the database which was acting as a publisher using the below T-SQL

BACKUP DATABASE ABC TO DISK=’B:\DB-BACKUPS\ABC\Full\ABC_Full.bak’

Once the Full Backup got completed, I started transferring it onto the Reporting Server which was acting as a subscriber. Since both the OLTP as well as the Reporting Server were located in the same Data Center with a very good Bandwidth as well as the N/W speed, it took around 5 hrs and 47 minutes for me to copy the backup onto the Reporting Server.

Once copied successfully, I started Restoring it on the Reporting Server using the below T-SQL.

RESTORE DATABASE ABC FROM DISK='B:\GLODBBACKUPS\ ABC _Full.bak' WITH MOVE 'ABC_Data' TO 'D:\Microsoft SQL Server\MSSQL.1\MSSQL\Data\DR\ABC_Data.mdf', MOVE 'ABC_Log' TO 'L:\Microsoft SQL Server\MSSQL.1\MSSQL\Data\DR\ABC_Log.ldf', NORECOVERY, STATS=10, REPLACE GO

RESTORE of Full Backup was done with NORECOVERY option so that I can restore additional backups over it; this will ensure that I have latest data on the subscriber while setting up the Replication procedure.

While the Full Backup was getting Restored on the subscriber, I started taking the Differential Backup of the same database i.e ABC on the Publisher itself i.e OLTP Database Server using the below T-SQL,

BACKUP DATABASE ABC TO DISK=’B:\DB-BACKUPS\ABC\Differential\ABC_Differential.bak’ GO

The size of the differential backup was very small, it was a mere 6 GB and by the time the Full Backup got completed, the differential backup got copied onto the subscriber successfully.

I then started restoring the Differential Backup over the database named ABC present on the subscriber using the below T-SQL.

RESTORE DATABASE ABC FROM DISK='B:\GLODBBACKUPS\ ABC_Differential.bak ' WITH MOVE 'ABC_Data' TO 'D:\Microsoft SQL Server\MSSQL.1\MSSQL\Data\DR\ABC_Data.mdf', MOVE 'ABC_Log' TO 'L:\Microsoft SQL Server\MSSQL.1\MSSQL\Data\DR\ABC_Log.ldf', NORECOVERY, STATS=10 GO

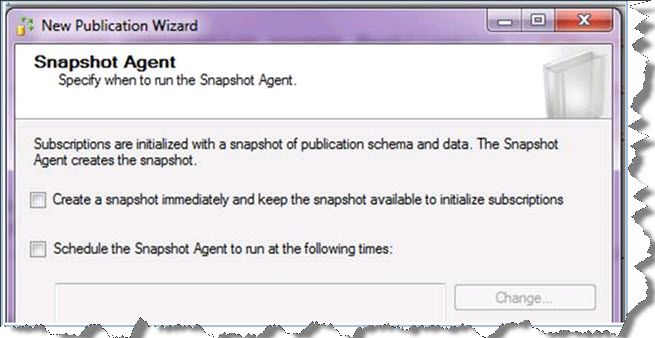

Once the differential backup got restored, I created the Publisher using the wizard itself but one important point to consider here is that I never set up any sort of settings for the Snapshot Agent at all, please refer the screen capture below:

The next step was to enable the publication to allow initialization from a backup file. This was done by executing the below T-SQL against the distribution database on the Publisher itself i.e OLTP Database Server.

EXEC sp_changepublication @Publication=ABC, @property = N'allow_initialize_from_backup', @value = True

I then took the latest Transactional Log Backup of the database named ABC which was present on the OLTP Database Server and then restored the same onto the database named ABC which was present on the subscriber with RECOVERY option. RECOVERY was used so that the database comes ONLINE. Restoration was done using the below T-SQL.

RESTORE DATABASE ABC FROM DISK='B:\GLODBBACKUPS\ ABC_Transactional.bak ' WITH MOVE 'ABC_Data' TO 'D:\Microsoft SQL Server\MSSQL.1\MSSQL\Data\DR\ABC_Data.mdf', MOVE 'ABC_Log' TO 'L:\Microsoft SQL Server\MSSQL.1\MSSQL\Data\DR\ABC_Log.ldf', RECOVERY, STATS=10 GO

The next step was to add the subscription using the below TSQL which was executed against the database named ABC on the OLTP DB Server i.e. the publisher.

exec sp_addsubscription @publication = N'ABC', @subscriber = N'PQRS1234', @destination_db = N'ABC', @subscription_type = N'Push', @sync_type = N'initialize with backup', @article = N'all', @update_mode = N'read only', @subscriber_type = 0, @backupdevicetype ='disk', @backupdevicename = ‘B:\DB-BACKUPS\ABC\Transactional\ABC_Transactional.bak’

I then manually executed all the indexes scripts which were generated as discussed in the beginning of the article itself against the database named ABC on the Reporting server i.e. the subscriber.

I then started the LogReader agent and noticed that the Replicated Commands were getting generated properly.

I then viewed the synchronization status of the subscriber and noticed that the Replicated commands were getting delivered properly onto the subscriber, please refer the screen capture below:

This ensured that the entire process worked well. It also ensures that the subscriber will have the same indexes as well as statistics as the publisher itself. In general, I would normally recommend using the above approach for setting up the Transactional Replication for databases which are huge in size. It saves a lot of time and even gives a lot of ease to the DBA as well.

My sincere thanks to all the readers for giving their valuable time in reading the article. Any feedback, alternative approach to the same will be Highly Appreciated. My sincere thanks to the editor Amit Bansal and the entire Support team at Larsen&Toubro Infotech for providing their valuable guidance which helps me to present some interesting Real World scenarios which I encounter in my Daily Work to the Community.

Regards

Satnam Singh

Like us on FaceBook | Follow us on Twitter

Follow me on FaceBook| Join the fastest growing SQL Server group on FaceBook

What was the size of backup file (5hr 47 min in same lan)

What is ‘PQRS1234’….Machine name???

I haven’t started replication via a backup yet, so I have a question. From the time that the log backup was taken, and the time the log reader agent was started, aren’t those lost transactions?

I dont think we can have a down time up to 5 to 6 hours in the real time to set up replication and this process seems to be a lenghty one. Like everyone knows , we use replication only to replicate objects instead of all the database ( Full Backup doesn’t make any sense ). I would suggest, Replication without Snapshot for this scenario.

Thanks for all the details. I have always wondered how to set up replication using the backup restore method. I am going to try this one in my test environment and see how it works. The only problem I have is that we do not need all the tables on the subscriber so will have to figure a way to drop those once replication is working.

Iam very happy to see this blog that to this info is very helpful for me. I am very much impressive with this website. Thanks a lot for visiting the nice info in this blog that to using the amazing technology in this blog.

when i tried add subscription with pull, i am getting error like “uninitialized subscription”

do we have to use push subscription always for this scenario??